
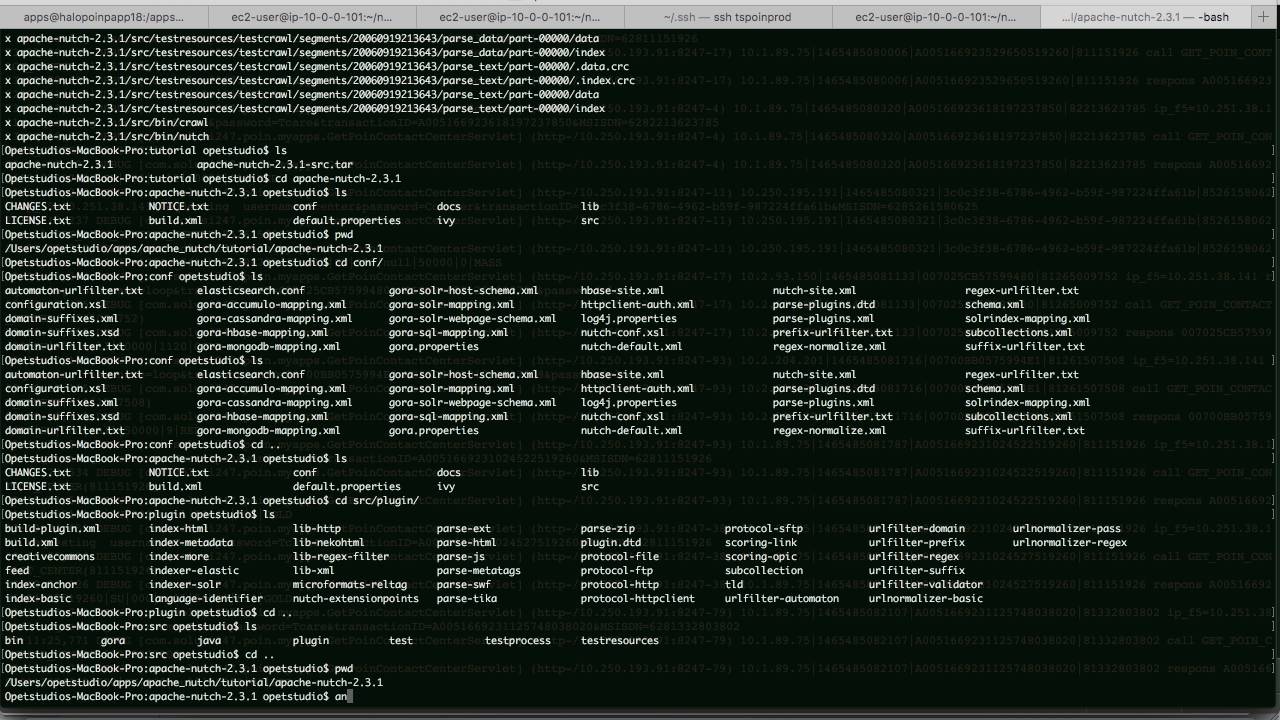

Some of the advantages of Nutch, when compared to a simple fetcher:

- Highly scalable and relatively feature-rich crawler
- Features like politeness, which obeys robots.txt rules
- Robust and scalable - you can run Nutch on a cluster of 100 machines
- Quality - you can bias the crawling to fetch “important” pages first

First, you need to know that Nutch data is composed of:

- The crawl database, or crawldb. This contains information about every URL known to Nutch, including whether it was fetched, and, if so, when.
- The link database, or linkdb. This contains the list of known links to each URL, including both the source URL and anchor text of the link.
- A set of segments. Each segment is a set of URLs that are fetched as a unit. Segments are directories with the following subdirectories:
  - crawl_generate names a set of URLs to be fetched
  - crawl_fetch contains the status of fetching each URL
  - content contains the raw content retrieved from each URL
  - parse_text contains the parsed text of each URL
  - parse_data contains outlinks and metadata parsed from each URL
  - crawl_parse contains the outlink URLs, used to update the crawldb

As of the official Nutch 1.3 release, the source code architecture has been greatly simplified to allow us to run Nutch in one of two modes, namely local and deploy.

By default, Nutch no longer comes with a Hadoop distribution; however, when run in local mode (e.g. running Nutch in a single process on one machine), we use Hadoop as a dependency. This may suit you fine if you have a small site to crawl and index, but most people choose Nutch because of its capability to run in deploy mode, within a Hadoop cluster. This gives you the benefit of a distributed file system (HDFS) and MapReduce processing style. If you are interested in deploy mode, read here.

Getting hands dirty with Nutch

Setup Nutch from binary distribution

Unzip your binary Nutch package to $HOME/nutch-1.3

From now on, we are going to use $ to refer to the current directory.

Here we try to crawl the seed URLs and their sublinks in the same domain.

    $ bin/nutch crawl urls -dir crawl-wiki-ci -depth 2

    $ nutch readdb crawl-wiki-ci/crawldb/ -stats
    CrawlDb statistics start: crawl-wiki-ci/crawldb/
    Statistics for CrawlDb: crawl-wiki-ci/crawldb/

    $ nutch readdb crawl-wiki-ci/crawldb/ -dump crawl-wiki-ci/stats
    Retry interval: 2592000 seconds (30 days)

    $ nutch readdb crawl-wiki-ci/crawldb/ -topN 10 crawl-wiki-ci/stats/top10/

    $ nutch readseg -dump crawl-wiki-ci/segments/20120204004509/ crawl-wiki-ci/stats/segments
    Metadata: Content-Language=en Age=52614 Content-Length=29341 Last-Modified=Sat, 17:27:22 GMT _fst_=33 =20120204004509 Connection=close X-Cache-Lookup=MISS from :80 Server=Apache X-Cache=MISS from X-Content-Type-Options=nosniff Cache-Control=private, s-maxage=0, max-age=0, must-revalidate Vary=Accept-Encoding,Cookie Date=Fri, 15:08:18 GMT Content-Encoding=gzip =1.
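The segment layout described above can be sanity-checked after a local-mode crawl with a few lines of Python. This is only a rough sketch and not part of Nutch itself: the helper names `missing_parts` and `check_segments` are made up for illustration, the crawl directory name `crawl-wiki-ci` is taken from the commands in this post, and the script assumes the crawl data sits on the local file system rather than on HDFS.

```python
from pathlib import Path

# Segment subdirectory names, as listed in the text above.
SEGMENT_PARTS = (
    "crawl_generate",
    "crawl_fetch",
    "content",
    "parse_text",
    "parse_data",
    "crawl_parse",
)


def missing_parts(segment_dir):
    """Return the expected subdirectories that are absent from one segment."""
    segment = Path(segment_dir)
    return [name for name in SEGMENT_PARTS if not (segment / name).is_dir()]


def check_segments(crawl_dir):
    """Map each segment name under <crawl_dir>/segments to its missing parts."""
    segments_root = Path(crawl_dir) / "segments"
    if not segments_root.is_dir():
        # No segments directory yet (hypothetical case: crawl never ran here).
        return {}
    return {
        seg.name: missing_parts(seg)
        for seg in sorted(segments_root.iterdir())
        if seg.is_dir()
    }


if __name__ == "__main__":
    # "crawl-wiki-ci" is the crawl directory used in the commands above.
    for name, missing in check_segments("crawl-wiki-ci").items():
        status = "complete" if not missing else "missing: " + ", ".join(missing)
        print(name, status)
```

A segment that reports missing parts is not necessarily broken; it may simply not have been fetched or parsed yet, so treat the output as a hint about how far each segment got.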
